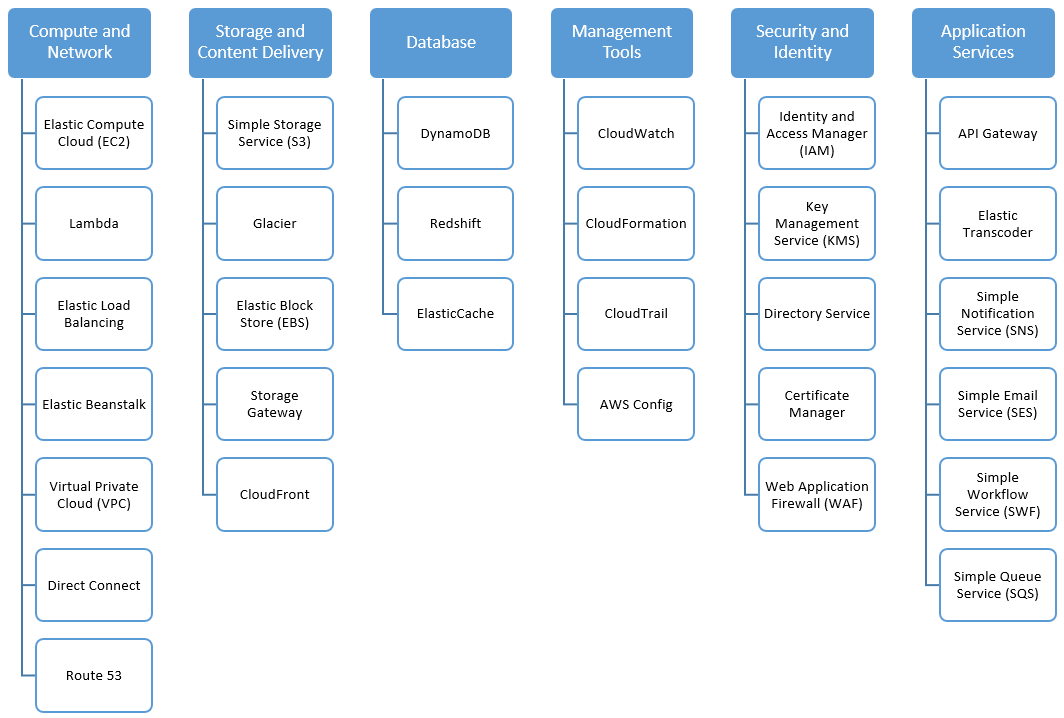

Amazon AWS

- AWS Fundamentals

- Storage and Content Delivery Service

- Compute and Network Services

- Database service

- DevOps & Management Tools

- Security and Identity

- Application Services

- Analytics

- Terminology

- References

AWS Fundamentals

- Cloud Computing - Deployment Models

- All-in cloud-based application is fully deployed in the cloud, with all components of the app running in the cloud

- Hybrid deployment is a common approach taken by many enterprises that connects infrastructure and apps between cloud-based resources and existing resources, typically in an existing data center.

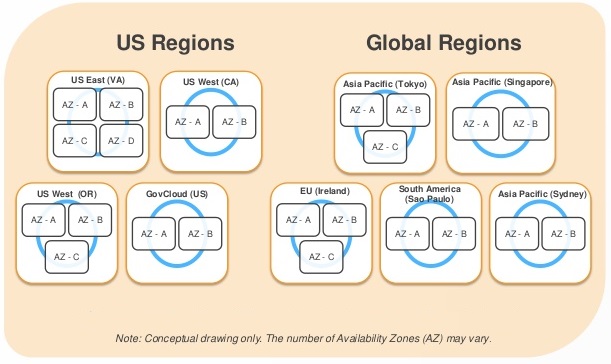

- Global Infrastructure

- Each region is a separate geographic area.

- Each region has multiple, isolated locations known as Availability Zones.

- Resources aren’t replicated across regions unless organizations choose to do so.

- For fault tolerance and stability, each region is completely isolated from each other.

- Each Availability Zone is also isolated, but they are connected through low-latency links.

- Each Availability Zone is located in lower-risk flood plains using a discrete UPS and on-site backup generators. They are each fed via different grids from independent utilities to reduce single points of failures further.

- Organizations retain complete control and ownership over the region in which their data is physically located, allowed them to meet regional compliance and data residency requirements.

- www.cloudping.info - to estimate the latency from your browser to each AWS region

- Accessing the platform

- via Amazon console

- via CLI

- via SDK

Storage and Content Delivery Service

Storage Basics

- Traditional Storage Types

- Block storage:

- operates at low-level - the raw storage device level - and manages data as a set of numbered, fixed-size blocks

- accessed over a network in the form of a Storage Area Network (SAN) using protocols such as iSCSI or Fiber channel

- very closely associated with the server and the OS

- E.g., Amazon Elastic Block Storage (EBS)

- File storage:

- operates at higher-level - the operating system level - and manages data as a named hierarchy of files and folders

- accessed over a network as a Network Attached Storage (NAS) file server or “filer” using protocols such as Common Internet File System (CIFS) or Network File System (NFS)

- very closely associated with the server and the OS

- E.g., AWS Elastic File System (EFS)

- Block storage:

- Cloud Storage

- Object storage

- Independent of a server and is accessed over the Internet.

- Instead of managing data as blocks or files using SCSI, CIFS or NFS protocols, data is managed as objects using REST API

- E.g., Amazon S3

- Object storage

S3 (Simple Storage Service)

S3 Overview

- S3 is a highly-durable and highly-scalable cloud object store

- By default, every object is world-readable

- Scalability: S3 automatically partitions buckets to support very high request rates and simultaneous access by many clients

- Data consistency

- S3 offers read-after-write consistency for

PUTs on new objects - otherwise offers eventual consistency

- S3 offers read-after-write consistency for

S3 Buckets

- Buckets are a simple flat folder with no file system hierarchy.

- Limitations

- It cannot have a sub-bucket within a bucket.

- Bucket name: max 63 lowercase letters, numbers, hyphens and periods

- Each bucket can hold an unlimited number of objects.

- Max 100 buckets per account.

S3 Objects

- Objects reside in containers called buckets

- An object can virtually store any kind of data in any formats

- Max object size = 5TB

- Each object has

- data (the file itself)

- metadata - set of name/value pairs

- System metadata - created and used by Amazon S3. e.g., last modified date, MD5, object size, etc

- User metadata - optional

- Object Key

- Each object is identified by a unique key (similar to a file name).

- Combination of

bucket + key + optional version IDuniquely identifies an S3 object - Limitations

- Max 1024 bytes of UTF-8 characters (including slashes, backslashes, dots, dashes)

- Keys must be unique within a single bucket

- You cannot incrementally update portions of the object as you would with a file

- ARNs (Amazon Resource Name) uniquely identify AWS resources. (AWS ARNs and Namespaces

- Object in an Amazon S3 bucket. e.g.,

arn:aws:s3:::my-family-photos-2017/birthday.jpgarn:aws:s3:::bucket_namearn:aws:s3:::bucket_name/key_name

- General formats

arn:partition:service:region:account-id:resourcearn:partition:service:region:account-id:resourcetype/resourcearn:partition:service:region:account-id:resourcetype:resource

- Object in an Amazon S3 bucket. e.g.,

S3 Durability & Availability

- Durability

- answers the question will my data still be there in the future?

- S3 provides 99.999999999% durability - meaning for 10K objects stored, you can on average expect to incur a loss of 1 object every 10,000,000 years

- Durability achieved by

- automatically storing data redundantly on multiple devices in multiple facilities within a region.

- design to sustain concurrent loss of data in 2 facilities without loss of user data

- highly durable infrastructure

- Availability

- answers the question can I access my data right now?

- S3 provides 99.99% availability

- S3 is an eventually consistent system

- S3 offers read-after-write consistency for

PUTs to new objects. Read-after-write guarantees immediate visibility of new data to all clients. PUTs to existing objects andDELETEs are offered at eventual consistency- Updates to a single key are atomic - for eventual-consistency reads, you will get the new data or the old data, but never an inconsistent mix of data.

- S3 offers read-after-write consistency for

S3 Storage Classes

- Hot - frequently accessed data

- Warm - less frequently accessed data as it ages

- Cold - long-term backup or archive data before eventual deletion

| S3 Standard | S3 Standard - Infrequent Access (IA) | Reduced Redundancy Storage (RRS) | Amazon Glacier | |

|---|---|---|---|---|

| Well-suited for | short-term or long-term storage of frequently accessed data | long-lived, less frequently accessed data that is stored for longer than 30 days | derived data that can be easily reproduced (e.g., thumbnails) | data that does not require real-time access, such as archives and long-term backups, where a retrieval time of several hours is suitable |

| Offers | high durability (99.999999999%), high availability, high performance, first low-byte latency, high throughput |

same durability, low latency, high throughput |

slightly lower durability (99.9999%) | secure, high durability (99.999999999%) |

| Cost | Has lower per GB-month cost than Standard Price model also includes a minimum object size (128KB), minimum duration (30 days), and per-GB retrieval costs. |

Reduced cost than Standard or Standard-IA | Extremely low-cost |

- Reduce storage costs by automatically transitioning data from one storage class to another or eventually deleting data after a period of time. For example,

- Store backup data initially in S3 Standard

- After 30 days, move to Standard-IA

- After 90 days, transition to Glacier,

- After 3 years, delete

- Lifecycle configurations are attached to bucket or specific objects

S3 Data Protection

- Bucket-level permissioning - existing permissioning policies can be copied or generated using ‘Bucket policy generator’

- By default, all S3 objects and buckets are private and can only be accessed by the owner.

- Access Control

- S3 Access Control Lists (ACLs) (Coarse-grained access controls)

- legacy mechanism, created before IAM

- Limited to read, write, or full-control at object/bucket level

- Best used for enabling bucket logging or making a bucket that hosts a static website be readable

- S3 bucket policies (Fine-grained access controls)

- Recommended access control mechanism

- Can specify

- who can access the bucket

- from where (by CIDR block or IP address)

- at what time of the day

- Bucket policies allows to assign cross-account access to S3 resources

- Similar to IAM but

- associated with bucket resource instead of an IAM principal

- includes an explicit reference to the IAM principal in the policy. This principal can be associated with a different AWS account

- AWS Identity and Access Management (IAM) policies

- Query String Authentication

- S3 Access Control Lists (ACLs) (Coarse-grained access controls)

- Encryption

- Encrypt data in-flight

- Transmit data using HTTPS protocol to SSL API endpoints

- Encrypt data at rest

- Server-Side Encryption (SSE)

- S3 encrypts data at the object level as it writes it to disks and decrypts it for you when you access it

- SSE-S3 (AWS Managed Keys)

- AWS handles the key management and key protection

- Every object is encrypted with a unique key

- The object encryption key is then encrypted using a separate master key

- A new master key is issued at least monthly with AWS rotating the keys.

- Encrypted data, keys and master keys are all stored separately on secure hosts

- SSE-KMS (AWS KMS Keys)

- AWS handles management and protection of your key

- SSE-KMS offers additional benefits

- provides audit of who used the key to access, which object, when.

- Allows to view failed attempts to access data from users who did not have permissions

- SSE-C (Customer Provided Keys)

- You maintain your own encryption keys, but don’t want to manage or implement your own client-side encryption library

- AWS will do the encryption/decryption of your objects while you maintain full control of the keys

- Client-Side Encryption (CSE)

- You encrypt the data before sending it to S3

- 2 options for using data encryption keys

- Use an AWS KMS-managed customer master key

- Use a client-side master key

- Server-Side Encryption (SSE)

- Encrypt data in-flight

| who creates the key? | who protects and manages the key? | who encrypts and decrypts data? | |

|---|---|---|---|

| SSE-S3 | AWS | AWS | AWS |

| SSE-KMS | You | AWS | AWS |

| SSE-C | You | You | AWS |

| CSE | You | You | You |

- Versioning

- If an object is accidentally changed/deleted, one can restore the object to its original state by referencing the version ID in addition to the bucket and object key

- Versioning is turned on at the bucket level. Once enabled, versioning cannot be removed from a bucket; it can only be suspended.

- Multi-Factor Authentication (MFA) Delete

- requires additional authentication in order to permanently delete an object version or change the versioning state of a bucket.

- MFA delete can only be enabled by the root account

- Pre-Signed URLs

- All S3 objects are by default private.

- Owner can share objects with others by creating pre-signed URL, using their own security credentials to grant time-limited permission to download the objects.

- To create a pre-signed URL, you must provide: your security credentials, bucket name, object key, the HTTP method (GET for download) and an expiration date and time.

- Useful to protect against ‘content scraping’ of web content such as media files stored in S3

Advanced Topics

- Multipart Upload

- To support uploading/copying large objects

- Allows to pause and resume. Has ability to upload objects where the size is initially unknown

- Object size > 100 MB - you should use multipart upload

- Object size > 5 GB - you must use multipart upload

- Using high-level APIs and high-level S3 command in CLI (

aws s3 cp,aws s3 mv,aws s3 sync), multipart upload is automatically performed for large objects - Using low-level APIs, you must break the file into parts and keep track of the parts.

- If a multipart upload is incomplete after specified number of days, you can set an object lifecycle policy on a bucket to abort to minimize the storage costs.

- Range GETs

- is used to download (GET) only a portion of an object in S3 or Glacier.

- Cross-Region Replication

- allows to asynchronously replicate all new objects from one region to another.

- Metadata and ACLs of the object also is replicated.

- Only new objects are replicated. Existing objects must be copied via a separate command.

- To enable Cross-Region Replication, versioning must be turned-on in both source and target buckets.

- An IAM policy must be used to give S3 permission to replicate objects on your behalf.

- Commonly used to reduce the latency to access objects by placing them closer to a set of users or store backup data at a certain distance from the original source data

- Logging

- Logging is off by default. While enabling, you must choose the bucket where the logs will be stored.

- Event Notifications

- Set up at bucket level to notify when an object is created, removed or lost in RRS

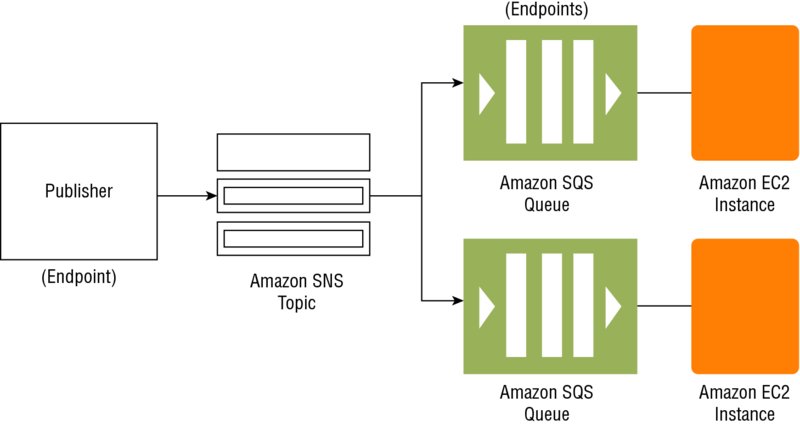

- Notification messages can be sent to Amazon SQS or SNS or AWS Lambda

Amazon Glacier

- Extremely low-cost storage service for data archiving and long-term backup. Optimized for infrequently accessed data where a retrieval time of several hours is suitable.

- To retrieve an object, issue a

restorecommand using S3 API; 3 to 5 hours later, it is copied to S3 RRS.Restoresimply creates a copy; the original data is retained in Glacier until explicitly deleted. - Archives

- Data is stored in archives

- Max 40 TB of data per archive

- Unlimited archives can be created

- Each archive is assigned a unique archive ID at the creation time

- Archives are automatically encrypted

- Archives are immutable

- Vaults

- Vaults are containers for archives.

- Max 1000 vaults per account

- Control access to vaults using IAM policies or vault access policies

- Vault lock policy: can specify controls such as Write Once Read Many (WORM) in a vault local policy to lock the policy from future edits.

- Retrieving up to 5% of stored data is free per month.

Amazon Elastic Block Store (EBS)

- Persistent block-level storage volumes for use with EC2 instances. Each EBS volume is automatically replicated within its Availability Zone to protect from component failure, offering HA and durability.

- Each EBS volume is automatically replicated within its Availability Zone

- Each EBS volume can be attached to a single EC2 instances

- A single EC2 instance can be attached to one or more EBS volumes

EBS Volume Types

| Magnetic Volume | General-Purpose SSD | Provisioned IOPS SSD | Throughput-Optimized HDD Volumes | Cold HDD Volumes | |

|---|---|---|---|---|---|

| Use Cases | Workloads where data is accessed infrequently, Sequential reads, Situations where low-cost storage is a requirement | System boot volumes, Virtual Desktops, small-to-medium size databases, Dev to Test environments | I/O intensive workloads, particularly large database workloads, Critical business apps that require sustained IOPS performance or more than 10,000 IOPS or 160 MB of throughput per volume |

Low-cost HDD volumes designed for frequent-access, throughput-intensive workloads such as big data, data warehouses, and log processing. | Designed for less frequently accessed workloads, such as colder data requiring fewer scans per day. |

| Volume Size | 1 GB to 1 TB | 1 GB to 16 TB | 4 GB to 16 TB | up to 16 TB | Max 16 TB |

| Max throughput | 40 - 90 MB | 160 MB | 320 MB | 500 MB/s | 250 MB/s |

| IOPS Performance | Average 100 IOPS with the ability to burst to 100s of IOPS | Baseline performance of 3 IOPS/GB (max 10,000 IOPS) with the ability to burst to 3000 IOPS for volumes under 1000 GB | Consistently performs at provisioned level, up to 20,000 IOPS max | max 500 | Max 250 |

| Cost | Cheapest | Based on the volume size provisioned (not space used) + number of IOPS provisioned, whether they are consumed or not | significantly less expensive than general-purpose SSD volumes. | significantly less expensive than Throughput-Optimized HDD volumes | |

| Misc | Lowest Performance | When you provision a Provisioned IOPS SSD volume, you should specify both the size and the desired number of IOPS You can stripe multiple volumes together in a RAID 0 configuration for larger size and greater performance |

EBS-Optimized Instances

- Uses an optimized configuration stack and provides additional, dedicated capacity for EBS I/O. This optimization provides the best performance for EBS volumes by minimizing contention between EBS I/O and other traffic from EC2 instance.

EBS Snapshots

- Backup EBS volume data by taking snapshots through AWS console, CLI, API or setting up a schedule for regular snapshots

- Snapshots are incremental backups, which means that only the blocks on the device that have changed since your most recent snapshot are saved.

- Snapshot data is stored in S3.

- The action of taking a snapshot is free. You pay only the storage costs for the snapshot data.

- When you request a snapshot, the point-in-time snapshot is created immediately and the volume may continue to be used, but the snapshot may remain in pending status until all the modified blocks have been transferred to S3.

- Snapshots are stored in AWS-controlled S3 storage; not in your account’s S3 buckets. This means you cannot manipulate them like other Amazon S3 objects. Rather, you must use the EBS snapshot features to manage them.

- Snapshots are constrained to the region in which they are created, meaning you can use them to create new volumes only in the same region. If you need to restore a snapshot in a different region, you can copy a snapshot to another region.

Creating a Volume from a Snapshot

- To use a snapshot, you create a new EBS volume from the snapshot. When you do this, the volume is created immediately but the data is loaded lazily. This means that the volume can be accessed upon creation, and if the data being requested has not yet been restored, it will be restored upon first request. Because of this, it is a best practice to initialize a volume created from a snapshot by accessing all the blocks in the volume.

- Snapshots can also be used to increase the size of an EBS volume. To increase the size of an EBS volume

- take a snapshot of the volume

- create a new volume of the desired size from the snapshot

- Replace the original volume with the new volume.

Recovering Volumes

- If an Amazon EBS-backed instance fails and there is data on the boot drive, it is relatively straightforward to detach the volume from the instance.

- Unless the

DeleteOnTerminationflag for the volume has been set to false, the volume should be detached before the instance is terminated. - The volume can then be attached as a data volume to another instance and the data read and recovered.

Encryption Options

- Amazon EBS offers native encryption on all volume types

- When you launch an encrypted EBS volume, Amazon uses the AWS KMS to handle key management. A new master key will be created unless you select a master key that you created separately in the service.

- Data and associated keys are encrypted using the industry-standard AES-256 algorithm.

- The encryption occurs on the servers that host EC2 instances, so the data is actually encrypted in transit between the host and the storage media and also on the media.

- Encryption is transparent, so all data access is the same as unencrypted volumes, and you can expect the same IOPS performance on encrypted volumes as you would with unencrypted volumes, with a minimal effect on latency.

- Snapshots that are taken from encrypted volumes are automatically encrypted, as are volumes that are created from encrypted snapshots.

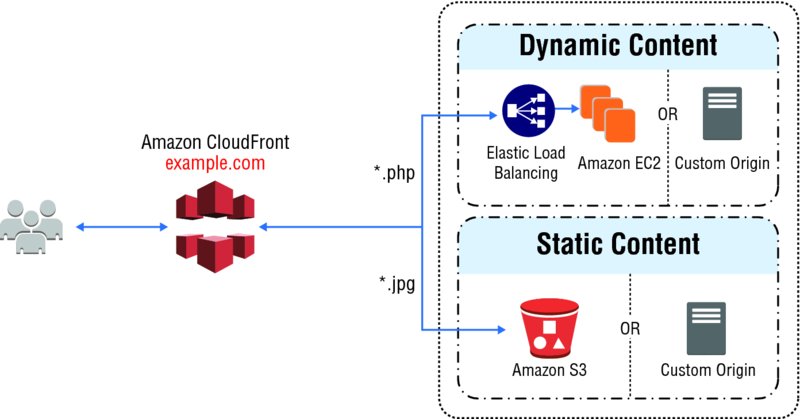

Amazon CloudFront

- is a global CDN service

- can be used to deliver websites including dynamic, static, streaming and interactive content, using a global network of edge locations. Request for content are automatically routed to the nearest edge location for best performance.

-

Edge caching refers to the use of caching servers to store content closer to end users. For instance, if you visit a popular Web site and download some static content that gets cached, each subsequent user will get served that content directly from the caching server until it expires.

- CDN Overview

- A CDN is a globally distributed network of caching servers that speed up the downloading of web pages and other content.

- CDNs use DNS geo-location to determine the geographic location of each request for a web page or other content, then they serve that content from edge caching servers closest to that location instead of the original web server.

- A CDN allows you to increase the scalability of a website or mobile application easily in response to peak traffic spikes.

- In most cases, using a CDN is completely transparent—end users simply experience better website performance, while the load on your original website is reduced.

- When a user requests content that you’re serving with CloudFront, the user is routed to the edge location that provides the lowest latency (time delay), so content is delivered with the best possible performance. If the content is already in the edge location with the lowest latency, CloudFront delivers it immediately. If the content is not currently in that edge location, CloudFront retrieves it from the origin server, such as an S3 bucket or a web server, which stores the original, definitive versions of your files.

- CloudFront is optimized to work with other AWS cloud services as the origin server, including S3 buckets, S3 static websites, EC2, and ELB.

- CloudFront also works seamlessly with any non-AWS origin server, such as an existing on-premises web server.

- CloudFront also integrates with Route 53.

- CloudFront supports all content that can be served over HTTP or HTTPS.

- CloudFront supports serving both static and dynamic web pages.

- CloudFront supports media streaming, using both HTTP and RTMP.

3 Core Concepts

- Distributions

- To use CloudFront, you start by creating a distribution, which is identified by a DNS domain name such as

d111111abcdef8.cloudfront.net. - To serve files from CloudFront, you simply use the distribution domain name in place of your website’s domain name; the rest of the file paths stay unchanged.

- You can use the CloudFront distribution domain name as-is, or you can create a user-friendly DNS name in your own domain by creating a CNAME record in Route 53 or another DNS service. The CNAME is automatically redirected to your CloudFront distribution domain name.

- To use CloudFront, you start by creating a distribution, which is identified by a DNS domain name such as

- Origins

- When you create a distribution, you must specify the DNS domain name of the origin—the S3 bucket or HTTP server—from which you want CloudFront to get the definitive version of your objects (web files)

- Cache Control

- Once requested and served from an edge location, objects stay in the cache until they expire or are evicted to make room for more frequently requested content.

- By default, objects expire from the cache after 24 hours.

- Once an object expires, the next request results in CloudFront forwarding the request to the origin to verify that the object is unchanged or to fetch a new version if it has changed.

- Optionally, control how long objects stay in an CloudFront cache before expiring.

- Cache-Control headers: set by your origin server, or

- TTL: set the minimum, maximum, and default Time to Live (TTL) for objects in your CloudFront distribution.

- Invalidation Feature

- can also remove copies of an object from all CloudFront edge locations at any time by calling the invalidation API.

- removes the object from every CloudFront edge location regardless of the expiration period you set for that object on your origin server.

- The invalidation feature is designed to be used in unexpected circumstances, such as to correct an error or to make an unanticipated update to a website, not as part of your everyday workflow.

- Versioning

- Instead of invalidating objects manually or programmatically, it is a best practice to use a version identifier as part of the object (file) path name. For example:

- Old file:

assets/v1/css/narrow.css - New file:

assets/v2/css/narrow.css

- Old file:

- When using versioning, users always see the latest content through CloudFront when you update your site without using invalidation.

- Old versions will expire from the cache automatically.

- Instead of invalidating objects manually or programmatically, it is a best practice to use a version identifier as part of the object (file) path name. For example:

Advanced Features

- Cache Behaviors

- Cache behaviors allows to

- To use more than one origin server

- control which requests are served by which origin

- how requests are cached

- A cache behavior lets you configure a variety of CloudFront functionalities for a given URL path pattern for files on your website.

- E.g., One cache behavior applies to all PHP files in a web server (dynamic content), using the path pattern

*.php, while another behavior applies to all JPEG images in another origin server (static content), using the path pattern*.jpg. - Each cache behavior includes the following:

- The path pattern

- Which origin to forward your requests to

- Whether to forward query strings to your origin

- Whether accessing the specified files requires signed URLs

- Whether to require HTTPS access

- The amount of time that those files stay in the CloudFront cache (regardless of the value of any

Cache-Controlheaders that your origin adds to the files)

- Cache behaviors are applied in order; if a request does not match the first path pattern, it drops down to the next path pattern. Normally the last path pattern specified is

*to match all files.

- Cache behaviors allows to

- Whole Website

- Using cache behaviors and multiple origins, you can easily use CloudFront to serve your whole website and to support different behaviors for different client devices.

- Private Content

- In many cases, you may want to restrict access to content in CloudFront to only selected requestors, such as paid subscribers or to applications or users in your company network.

- CloudFront provides several mechanisms to allow you to serve private content.

- Signed URLs: Use URLs that are valid only between certain times and optionally from certain IP addresses.

- Signed Cookies: Require authentication via public and private key pairs.

- Origin Access Identities (OAI): Restrict access to an S3 bucket only to a special CloudFront user associated with your distribution. This is the easiest way to ensure that content in a bucket is only accessed by CloudFront.

Use Cases

Good Use Cases

- Serving the Static Assets of Popular Websites

- Serving a Whole Website or Web Application

- Serving Content to Users Who Are Widely Distributed Geographically

- Distributing Software or Other Large Files

- Serving Streaming Media

Inappropriate Use Cases

- All or Most Requests Come From a Single Location: If all or most of your requests come from a single geographic location, such as a large corporate campus, you will not take advantage of multiple edge locations.

- All or Most Requests Come Through a Corporate VPN: Similarly, if your users connect via a corporate VPN, even if they are distributed, user requests appear to CloudFront to originate from one or a few locations.

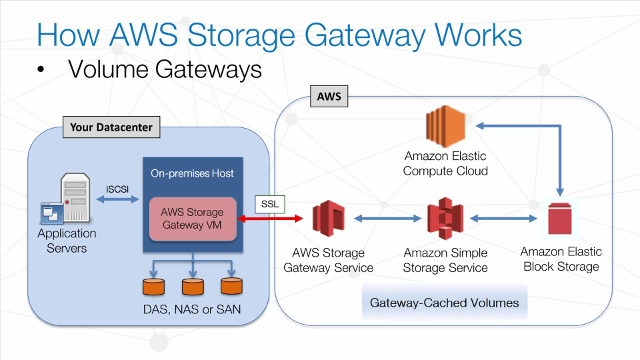

AWS Storage Gateway

- AWS Storage Gateway is a service to provide seamless and secure integration between an organization’s on-premises IT environment and AWS storage infrastructure.

- supports industry-standard storage protocols that work with your existing applications.

- It provides low-latency performance by caching frequently accessed data on-premises while encrypting and storing all of your data in S3 or Glacier.

- AWS Storage Gateway’s software appliance is available for download as a VM image that you install on a host in your data center and then register with your AWS account through the AWS Management Console. The storage associated with the appliance is exposed as an iSCSI device that can be mounted by your on-premises applications.

|

|

3 configurations for AWS Storage Gateway

- Gateway-Cached Volumes

- allows to expand local storage capacity into S3.

- All data stored on a Gateway-Cached volume is moved to S3, while recently read data is retained in local storage to provide low-latency access.

- Max volume size = 32TB

- Max number volumes per gateway = 32 (max storage = 32TB * 32 = 1PB)

- Backup

- Point-in-time snapshots can be taken to back up your AWS Storage Gateway- snapshots are performed incrementally, and only the data that has changed since the last snapshot is stored.

- All volume data and snapshot data is transferred to S3 over encrypted SSL connections - encrypted at rest in S3 using SSE.

- Data cannot be directly accessed with the S3 API or other tools such as the S3 console; instead you must access it through the AWS Storage Gateway service.

- Gateway-Stored Volumes

- allows to store on-premises storage to S3 and asynchronously back up that data to S3.

- provides low-latency access to all data, while also providing off-site backups taking advantage of the durability of S3.

- While each volume is limited to a maximum size of 16TB, a single gateway can support up to 32 volumes for a maximum storage of 512TB.

- Max volume size = 16TB

- Max number of volumes per gateway = 32 (max storage = 16TB * 32 = 512TB)

- Backup

- The data is backed up in the form of EBS snapshots.

- Point-in-time snapshots can be taken to back up your AWS Storage Gateway.

- Gateway stores the snapshots in S3 as EBS snapshots. When a new snapshot is taken, only the data that has changed since last snapshot is stored.

- Snapshots can be initiated on a scheduled or one-time basis.

- Because these snapshots are stored as EBS snapshots, a new EBS volume can be created from a Gateway-Stored volume.

- All volume data and snapshot data is transferred to S3 over encrypted SSL connections - encrypted at rest in S3 using SSE.

- Data cannot be directly accessed with the S3 API or other tools such as the S3 console.

- If your on-premises appliance or even entire data center becomes unavailable, the data in AWS Storage Gateway can still be retrieved.

- If it’s only the appliance that is unavailable, a new appliance can be launched in the data center and attached to the existing AWS Storage Gateway.

- A new appliance can also be launched in another data center or even on an EC2 instance on the cloud.

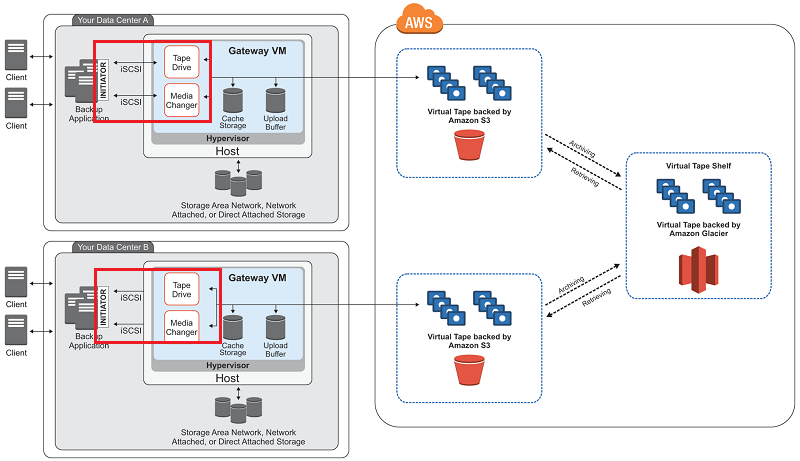

- Gateway Virtual Tape Libraries (VTL)

- Gateway-VTL offers a durable, cost-effective solution to archive your data on the AWS cloud.

- The VTL interface lets you leverage your existing tape-based backup application infrastructure to store data on virtual tape cartridges that you create on your Gateway-VTL.

- A virtual tape is analogous to a physical tape cartridge, except the data is stored on the AWS cloud.

- Tapes are created blank through the console or programmatically and then filled with backed up data.

- Virtual tapes appear in your gateway’s VTL, a virtualized version of a physical tape library. Virtual tapes are discovered by your backup application using its standard media inventory procedure.

- When your tape software ejects a tape, it is archived on a Virtual Tape Shelf (VTS) and stored in Glacier.

- Max number of tapes per gateway = 1,500 (max storage = 1 PB)

- Max VTS allowed per region = 1 VTS

- Multiple gateways in the same region can share a VTS.

Use Cases

- Gateway-Cached volumes enable you to expand local storage hardware to S3, allowing you to store much more data without drastically increasing your storage hardware or changing your storage processes.

- Gateway-Stored volumes provide seamless, asynchronous, and secure backup of your on-premises storage without new processes or hardware.

- Gateway-VTLs enable you to keep your current tape backup software and processes while storing your data more cost-effectively and simply on the cloud.

Compute and Network Services

EC2

Instance Types

- 2 key concepts to an instance:

- the amount of virtual hardware dedicated to the Instance

- the software loaded on the instance

- Instance types vary in following dimensions:

- Virtual CPUs (vCPUs)

- Memory

- Storage

- Network performance

- Instance types are grouped into families

- The ratio of vCPUs to memory is constant as the size scale linearly

- The hourly price for each size scales linearly. e.g.,

Cost(m4.xlarge) = 2 x Cost(m4.large) - Optimized Instance Type Family

m4family provides a balance of compute, memory and n/w resourcesc4compute optimized for workloads requiring significant processingr3memory optimized for memory-intensive workloadsi2storage optimized for workloads requiring high amounts of fast SSD storageg2GPU-based instances for graphics and general-purpose GPU compute workloads

- Network performance

- AWS publishes a relative measure of n/w performance: low, moderate, high

- Enhanced Networking:

- For workloads requiring greater n/w performance, use instance types supporting enhanced networking.

- It reduces the impact of virtualization on n/w performance enabling a capability called Single Root I/O Virtualization (SR-IOV)

- This results in more packets per second (PPS), lower latency and less jitter

Amazon Machine Image (AMI)

- defines the initial software that will be on an instance when it is launched: OS + initial state of patches + application/system software

- All AMIs are based on x86 OS (either Linux or Windows)

- 4 Sources of AMIs

- Published by AWS: you should apply patches upon launch

- AWS Marketplace

- Generated from Existing instances: published AMI + corporate standard software added

- Uploaded Virtual Servers: Using AWS VM Import/Export to create images from formats: VHD, VMDK, OVA

Addressing an Instance

There are several ways that an instance may be addressed over the web upon creation:

- Public DNS Name

- DNS name is generated automatically and cannot be specified by the customer.

- This DNS name persists only while the instance is running and cannot be transferred to another instance.

- Public IP

- This IP address is assigned from the addresses reserved by AWS and cannot be specified.

- This IP address is unique on the Internet, persists only while the instance is running, and cannot be transferred to another instance.

- Elastic IP

- An elastic IP address is an address unique on the Internet that you reserve independently and associate with an instance.

- This IP address persists until the customer releases it and is not tied to the lifetime or state of an individual instance.

- Because it can be transferred to a replacement instance in the event of an instance failure, it is a public address that can be shared externally without coupling clients to a particular instance.

Private IP addresses and Elastic Network Interfaces (ENIs) are additional methods of addressing instances that are available in the context of an VPC.

Securing an Instance

Initial Access

- EC2 uses public-key cryptography to encrypt and decrypt login information.

- Public-key cryptography uses a public key to encrypt a piece of data and an associated private key to decrypt the data. These two keys together are called a key pair.

- Key pairs can be created through the AWS Management Console, CLI, or API, or customers can upload their own key pairs.

- AWS stores the public key in

~/.ssh/authorized_keysfolder - Private keys are kept by the customer. The private key is essential to acquiring secure access to an instance for the first time.

- When launching a Windows instance, EC2 generates a random password for the local administrator account and encrypts the password using the public key. Initial access to the instance is obtained by decrypting the password with the private key, either in the console or through the API.

Virtual Firewall Protection

- AWS allows you to control traffic in and out of your instances through virtual firewalls called security groups.

- Security groups allow you to control traffic based on port, protocol, and source/destination.

- Security groups are applied at the instance level, as opposed to a traditional on-premises firewall that protects at the perimeter. To breach a single perimeter to access all instances, one has to breach the security groups repeatedly for each individual instances

- Security groups have different capabilities :

- EC2-Classic Security Groups - Control outgoing instance traffic

- VPC Security Groups - Control outgoing and incoming instance traffic

- Every instance must have at least one security group but can have more

- Changing Security Group

- If an instance is running in an VPC, you can change which security groups are associated with an instance while the instance is running.

- For instances outside of an VPC (called EC2-Classic), the association of the security groups cannot be changed after launch.

- A security group is default deny - it does not allow any traffic that is not explicitly allowed by a security group rule.

- A rule is defined by 3 attributes:

- Port

- Protocol. e.g., HTTP, RDP, etc.

- Source/Destination- can be defined in 2 ways:

- CIDR block

- Security group — Includes any instance that is associated with the given security group. This helps prevent coupling security group rules with specific IP addresses

- When an instance is associated with multiple security groups, the rules are aggregated and all traffic allowed by each of the individual groups is allowed. For example, if security group A allows RDP traffic from 72.58.0.0/16 and security group B allows HTTP and HTTPS traffic from 0.0.0.0/0 and your instance is associated with both groups, then both the RDP and HTTP/S traffic will be allowed in to your instance.

- A security group is a stateful firewall; that is, an outgoing message is remembered so that the response is allowed through the security group without an explicit inbound rule being required.

Lifecycle of Instances

Bootstrapping

- Ability to configure instances and install applications programmatically when an instance is launched. The process of providing code to be run on an instance at launch is called bootstrapping.

- When an instance is launched for the first time, a string-valued parameter

UserDatais passed to the OS to be executed as part of the launch process. On Linux, this can be a shell script, performing tasks like- Applying patches and updates to the OS

- Installing application software

- Copying a longer script or program from storage to be run on the instance

- Installing Chef or Puppet and assigning the instance a role so the configuration management software can configure the instance

UserDatais stored with the instance and is not encrypted. DO NOT include any secrets such as passwords or keys in it.

VM Import/Export

- Import VMs from your existing environment as an EC2 instance and export them back to your on-premises environment.

- You can only export previously imported EC2 instances. Instances launched within AWS from AMIs cannot be exported.

Instance Metadata

- Instance metadata is data about your instance that you can use to configure or manage the running instance. This is unique in that it is a mechanism to obtain AWS properties of the instance from within the OS without making a call to the AWS API.

- An HTTP call to http://169.254.169.254/latest/meta-data/ will return the top node of the instance metadata tree.

- Instance metadata includes:

- The associated security groups

- The instance ID

- The instance type

- The AMI used to launch the instance

Instance Tags

- Tags are key/value pairs you can associate with your instance or other service.

- Tags can be used to identify attributes of an instance like project, environment (dev, test, and so on), billable department, and so forth.

- Max 10 tags per instance.

Modifying Instance Type

- If the compute needs prove to be higher or lower than expected, the instances can be changed to a different size more appropriate to the workload.

- Stop instance -> Change instance type -> Restart instance

Termination Protection

- To prevent accidental termination, when Termination Protection is enabled, calls to terminate the instance will fail.

- It does not prevent termination triggered

- by an OS shutdown command,

- termination from an Auto Scaling group, or

- termination of a Spot Instance due to Spot price changes

Instance Pricing Options

- On-Demand Instances

- Least cost-effective due to the flexibility it allows customers to save by provisioning a variable level of compute for unpredictable workloads

- The price is per hour for each instance type

- No up-front commitment, and the customer has control over when the instance is launched and when it is terminated

- Reserved Instances

- 75% less cost compared to On-Demand hourly rate

- When purchasing a reservation, the customer specifies the instance type and Availability Zone

- Capacity in the data centers is reserved for that customer.

- 2 factors determining the cost:

- Term commitment - the duration of the reservation

- Payment option

- All Upfront —Pay for the entire reservation up front. There is no monthly charge for the customer during the term.

- Partial Upfront —Pay a portion of the reservation charge up front and the rest in monthly installments for the duration of the term.

- No Upfront —Pay the entire reservation charge in monthly installments for the duration of the term.

- Modifying Reservation: You can modify your whole reservation, or just a subset, in one or more of the following ways:

- Switch Availability Zones within the same region.

- Change between EC2-VPC and EC2-Classic.

- Change the instance type within the same instance family (Linux instances only).

- One may purchase two Reserved Instances to handle the average traffic, but depend on On-Demand Instances to fulfill compute needs during the peak times.

- Spot Instances

- offers the greatest discount

- For workloads that are not time critical and are tolerant of interruption

- With Spot Instances, when the customer’s bid price is above the current Spot price, the customer will receive the requested instance(s). These instances will operate like all other EC2 instances, and the customer will only pay the Spot price for the hours that instance(s) run.

- The instances will run until:

- The customer terminates them.

- The Spot price goes above the customer’s bid price.

- There is not enough unused capacity to meet the demand for Spot Instances.

- If EC2 needs to terminate a Spot Instance, the instance will receive a termination notice providing a two-minute warning prior to EC2 terminating the instance.

Tenancy options

- Shared Tenancy

- a single host machine may house instances from different customers

- Default model

- AWS does not use overprovisioning and fully isolates instances from other instances on the same host, this is a secure tenancy model.

- Dedicated Instance

- runs on hardware that’s dedicated to a single customer.

- Dedicated Host

- Dedicated Host is a physical server with EC2 instance capacity fully dedicated to a single customer’s use.

- The customer has complete control over which specific host runs an instance at launch. This differs from Dedicated Instances in that a Dedicated Instance can launch on any hardware that has been dedicated to the account.

- can help address licensing requirements and reduce costs by allowing to use existing server-bound software licenses.

- Placement Groups

- is a logical grouping of instances within a single Availability Zone.

- enable applications to participate in a low-latency, 10 Gbps network.

- recommended for applications that benefit from low network latency, high network throughput, or both. This represents network connectivity between instances.

- To fully use this network performance for your placement group, choose an instance type that supports enhanced networking and 10 Gbps network performance.

Instance Stores

- An instance store provides temporary block-level storage (ephemeral storage)

- Data in the instance store is lost when:

- the underlying disk drive fails

- the instance stops

- the instance terminates

AWS Lambda

- a zero-administration compute platform for back-end web developers that runs your code for you on the AWS cloud (for high availability)

Auto Scaling

-

allows to scale EC2 capacity up or down automatically according to conditions defined for the particular workload (for scaling in and out)

- Advantage of deploying applications to the cloud is the ability to launch and then release servers in response to variable workloads.

-

Provisioning servers on demand and then releasing when not needed can provide significant cost savings. E.g., an end-of-month data-input system, a retail shopping site supporting flash sales, etc.

- Embrace the Spike

- Many web applications have unplanned load increases based on events outside of your control.

- Setting up Auto Scaling in advance will allow to embrace and survive such fast increase in the number of requests. It will scale up your site to meet the increased demand and then scale down when the event subsides.

Auto Scaling Plans

- Maintain Current Instance Levels

- Configure your Auto Scaling group to maintain a minimum or specified number of running instances at all times.

- To maintain the current instance levels, Auto Scaling performs a periodic health check on running instances within an Auto Scaling group.

- When Auto Scaling finds an unhealthy instance, it terminates that instance and launches a new one.

- Steady state workloads that need a consistent number of EC2 instances at all times can use Auto Scaling to monitor and keep that specific number of EC2 instances running.

- Manual Scaling

- This is the most basic way to scale your resources. You just need to specify the change in the maximum, minimum, or desired capacity of your Auto Scaling group.

- Auto Scaling manages the process of creating or terminating instances to maintain the updated capacity.

- Manual scaling out can be very useful to increase resources for an infrequent event, such as movie release dates.

- For extremely large-scale events, even the ELB load balancers can be pre-warmed by working with your local solutions architect or AWS Support.

- Scheduled Scaling

- When you have a recurring schedule or a predictable schedule of when you will need to increase or decrease the number of instances in your group, e.g., end-of-month, or end-of-year processing, schedule scaling is useful.

- Scaling actions are performed automatically as a function of time and date.

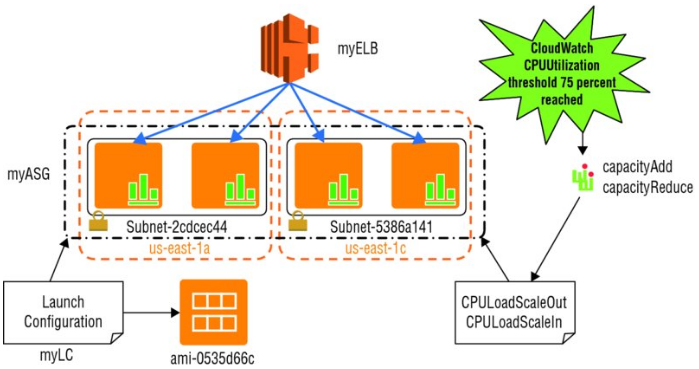

- Dynamic Scaling

- Lets you define parameters that control the Auto Scaling process in a scaling policy. E.g., create a policy that adds more EC2 instances to the web tier when the network bandwidth, measured by CloudWatch, reaches a certain threshold.

Auto Scaling Components

Launch Configuration

- A launch configuration is the template used to create new instances, and it has the following:

- Name (e.g., myLC)

- AMI (e.g., ami-0535d66c)

- Instance type (e.g., m3.medium)

- Security groups (e.g., sg-f57cde9d)

- Instance key pair (e.g., myKeyPair)

- Each Auto Scaling group can have only one launch configuration at a time.

- Security groups for instances launched in EC2-Classic may be referenced by security group name or by security group IDs. Security group ID is recommended.

- Limits

- Default limit for launch configurations = 100 per region. If you exceed this limit, the call to create-launch-configuration will fail. To update this limit:

aws autoscaling describe-account-limits - Auto Scaling may cause you to reach limits of other services, such as the default number of EC2 instances you can currently launch = 20 per region.

- When you run a command using the CLI and it fails,

- check your syntax first. If that checks out,

- verify the limits for the command you are attempting, and check to see that you have not exceeded a limit.

- To raise the limits, in cases allowed, create a support case at the AWS Support Center online and then choose Service Limit Increase under Regarding.

- Default limit for launch configurations = 100 per region. If you exceed this limit, the call to create-launch-configuration will fail. To update this limit:

Auto Scaling Group

- An Auto Scaling group is a collection of EC2 instances managed by the Auto Scaling service.

- Each Auto Scaling group contains configuration options that control when Auto Scaling should launch new instances and terminate existing instances.

- An Auto Scaling group must contain

- a name

- a minimum and maximum number of instances that can be in the group.

- (optional) desired capacity, which is the number of instances that the group must have at all times. If not specified, the default desired capacity = the minimum number of instances.

1 2 3 4 5 6 7 | |

- An Auto Scaling group can use either On-Demand (default) or Spot instances, but not both.

- Spot Instances

- Bid price can be modified

- If instances are available at or below your bid price, they will be launched in your Auto Scaling group.

Spot On!

- Spot Instances can be useful when hosting sites to provide additional compute capacity but are price constrained. E.g., a “freemium” site model where some basic functionality to users are free and additional functionality is for premium users.

- It can be used for providing the basic functionality when available by referencing a maximum bid price in the launch configuration (

—spot-price "0.15") associated with the Auto Scaling group.

Scaling Policy

- CloudWatch alarms and scaling policies can be associated with an Auto Scaling group to adjust Auto Scaling dynamically.

- When a threshold is crossed, CloudWatch sends alarms to trigger changes (scaling in or out) to the number of EC2 instances currently receiving traffic behind a load balancer.

- After the CloudWatch alarm sends a message to the Auto Scaling group, Auto Scaling executes the associated policy to scale your group.

- The policy is a set of instructions that tells Auto Scaling whether to scale out, launching new EC2 instances referenced in the associated launch configuration, or to scale in and terminate instances.

- Various ways to configure a scaling policy:

- increase or decrease by a specific number of instances, or

- adjust based on a percentage.

- scale by steps and increase or decrease the current capacity of the group based on a set of scaling adjustments that vary based on the size of the alarm threshold trigger.

-

More than one scaling policy can be associated with an Auto Scaling group. E.g., One policy to scale out if CPU Load > 75% for 2 minutes. Another policy to scale in if CPU Load < 40% for 20 minutes.

- Best Practice

- Scale out quickly and scale in slowly so you can respond to bursts or spikes but avoid inadvertently terminating EC2 instances too quickly, only having to launch more EC2 instances if the burst is sustained.

- Auto Scaling also supports a cooldown period, which is a configurable setting that determines when to suspend scaling activities for a short time for an Auto Scaling group.

- If you start an EC2 instance, you will be billed for one full hour of running time. Partial instance hours consumed are billed as full hours. This means that if you have a permissive scaling policy that launches, terminates, and re-launches many instances an hour, you are billing a full hour for each and every instance you launch, even if you terminate some of those instances in less than hour.

- Bootstrapping new EC2 instances launched using Auto Scaling takes time to configure before the instance is healthy and capable of accepting traffic. Instances that start and are available for load faster can join the capacity pool more quickly.

- Stateless instances can enter and exit gracefully than a stateful instance in an Auto Scaling group.

- Rolling Out a Patch at Scale

- In large deployments of EC2 instances, Auto Scaling can be used to make rolling out a patch to your instances easy.

- The launch configuration associated with the Auto Scaling group may be modified to reference a new AMI and even a new EC2 instance if needed. Then you can deregister or terminate instances one at a time or in small groups, and the new EC2 instances will reference the new patched AMI.

Elastic Load Balancing (ELB)

-

automatically distributes incoming application traffic across multiple EC2 instances. (for fault-tolerance)

- ELB allows to distribute traffic across a group of EC2 instances in one or more Availability Zones, to achieve high availability in your applications.

- ELB supports routing and load balancing of HTTP, HTTPS, TCP, and SSL traffic to EC2 instances.

- ELB provides a stable, single Canonical Name record (CNAME) entry point for DNS configuration and supports both Internet-facing and internal application-facing load balancers.

- ELB supports health checks for EC2 instances to ensure traffic is not routed to unhealthy or failing instances. It can scale automatically based on collected metrics.

- Long-running applications will eventually need to be maintained and updated with a newer version of the application. When using EC2 instances running behind an ELB load balancer, you may deregister these long-running EC2 instances associated with a load balancer manually and then register newly launched EC2 instances that you have started with the new updates installed.

- Advantages of ELB

- Because ELB is a managed service, it scales in and out automatically to meet the demands of increased application traffic and is highly available within a region itself as a service.

- ELB helps you achieve high availability for your applications by distributing traffic across healthy instances in multiple Availability Zones.

- ELB seamlessly integrates with the Auto Scaling service to automatically scale the EC2 instances behind the load balancer.

- ELB is secure, working with VPC to route traffic internally between application tiers, allowing you to expose only Internet-facing public IP addresses.

- ELB also supports integrated certificate management and SSL termination.

Types of Load Balancers

Internet-Facing Load Balancers

- Takes requests from clients over the Internet and distributes them to EC2 instances that are registered with the load balancer.

- When you configure a load balancer, it receives a public DNS name that clients can use to send requests to your application. The DNS servers resolve the DNS name to your load balancer’s public IP address, which can be visible to client applications.

- Because ELB scales in and out to meet traffic demand, it is not recommended to bind an application to an IP address that may no longer be part of a load balancer’s pool of resources.

- Best practice: always refer a load balancer by its DNS name, instead of by the IP address, in order to provide a single, stable entry point.

- ELB in VPC supports IPv4 addresses only.

- ELB in EC2-Classic supports both IPv4 and IPv6 addresses.

Internal Load Balancers

- In a multi-tier application, it is often useful to load balance between the tiers of the application. For example, an Internet-facing load balancer might receive and balance external traffic to the presentation or web tier whose EC2 instances then send its requests to a load balancer sitting in front of the application tier.

- Internal load balancers can be used to route traffic to EC2 instances in VPCs with private subnets.

HTTPS Load Balancers

- Load balancer that uses the SSL/TLS protocol for encrypted connections (also known as SSL offload).

- This feature enables traffic encryption between your load balancer and the clients that initiate HTTPS sessions, and for connections between your load balancer and your back-end instances.

- Elastic Load Balancing provides security policies that have predefined SSL negotiation configurations to use to negotiate connections between clients and the load balancer. In order to use SSL, you must install an SSL certificate on the load balancer that it uses to terminate the connection and then decrypt requests from clients before sending requests to the back-end EC2 instances.

- You can optionally choose to enable authentication on your back-end instances.

- ELB does not support Server Name Indication (SNI) on your load balancer. This means that if you want to host multiple websites on a fleet of EC2 instances behind ELB with a single SSL certificate, you will need to add a Subject Alternative Name (SAN) for each website to the certificate to avoid site users seeing a warning message when the site is accessed.

Listeners

- A listener is a process that checks for connection requests—for example, a CNAME configured to the A record name of the load balancer.

- Every load balancer must have one or more listeners configured.

- Every listener is configured with

- a protocol and a port (client to load balancer) for a front-end connection

- a protocol and a port for the back-end (load balancer to EC2 instance) connection.

- ELB supports protocols operating at two different Open System Interconnection (OSI) layers.

- In the OSI model, Layer 4 is the transport layer that describes the TCP connection between the client and your back-end instance through the load balancer. Layer 4 is the lowest level that is configurable for your load balancer.

- Layer 7 is the application layer that describes the use of HTTP and HTTPS connections from clients to the load balancer and from the load balancer to your back-end instance.

ELB Configurations

Idle Connection Timeout

- For each request that a client makes through a load balancer, the load balancer maintains two connections.

- Connection 1: with the client

- Connection 2: to the back-end instance

- For each connection, the load balancer manages an idle timeout that is triggered when no data is sent over the connection for a specified time period. After the idle timeout period has elapsed, if no data has been sent or received, the load balancer closes the connection.

- Default idle timeout for both connections = 60 seconds. This can be modified.

- If an HTTP request doesn’t complete within the idle timeout period, the load balancer closes the connection, even if data is still being transferred.

- Keep-alive

- If you use HTTP and HTTPS listeners, we recommend that you enable the keep-alive option for your EC2 instances. You can enable keep-alive in your web server settings or in the kernel settings for your EC2 instances.

- Keep-alive, when enabled, allows the load balancer to reuse connections to your back-end instance, which reduces CPU utilization.

- To ensure that the load balancer is responsible for closing the connections to your back-end instance, make sure that the value you set for the keep-alive time is greater than the idle timeout setting on your load balancer.

Cross-Zone Load Balancing

- To ensure that request traffic is routed evenly across all back-end instances for your load balancer, regardless of the Availability Zone in which they are located, you should enable cross-zone load balancing on your load balancer.

- Cross-zone load balancing reduces the need to maintain equivalent numbers of back-end instances in each Availability Zone and improves your application’s ability to handle the loss of one or more back-end instances.

- However, it is still recommended that you maintain approximately equivalent numbers of instances in each Availability Zone for higher fault tolerance.

- For environments where clients cache DNS lookups, incoming requests might favor one of the Availability Zones. Using cross-zone load balancing, this imbalance in the request load is spread across all available back-end instances in the region, reducing the impact of misconfigured clients.

Connection Draining

- To ensure that the load balancer stops sending requests to instances that are deregistering or unhealthy, while keeping the existing connections open. This enables the load balancer to complete in-flight requests made to these instances.

- When you enable connection draining, you can specify a maximum time for the load balancer to keep connections alive before reporting the instance as deregistered.

- The maximum timeout value can be set between 1 and 3,600 seconds (the default is 300 seconds).

- When the maximum time limit is reached, the load balancer forcibly closes connections to the deregistering instance.

Proxy Protocol

- When you use TCP or SSL for both front-end and back-end connections, your load balancer forwards requests to the back-end instances without modifying the request headers.

- If you enable Proxy Protocol, a human-readable header is added to the request header with connection information such as the source IP address, destination IP address, and port numbers. The header is then sent to the back-end instance as part of the request.

- Before using Proxy Protocol, verify that your load balancer is not behind a proxy server with Proxy Protocol enabled. If Proxy Protocol is enabled on both the proxy server and the load balancer, the load balancer adds another header to the request, which already has a header from the proxy server. Depending on how your back-end instance is configured, this duplication might result in errors.

Sticky Sessions

- By default, a load balancer routes each request independently to the registered instance with the smallest load. However, you can use the sticky session feature (also known as session affinity), which enables the load balancer to bind a user’s session to a specific instance. This ensures that all requests from the user during the session are sent to the same instance.

- The key to managing sticky sessions is to determine how long your load balancer should consistently route the user’s request to the same instance. If your application has its own session cookie, you can configure ELB so that the session cookie follows the duration specified by the application’s session cookie.

- If your application does not have its own session cookie, you can configure ELB to create a session cookie by specifying your own stickiness duration.

- ELB creates a cookie named AWSELB that is used to map the session to the instance.

Health Checks

- ELB supports health checks to test the status of the EC2 instances behind an ELB load balancer.

- If healthy, status = InService, else OutOfService.

- The load balancer performs health checks on all registered instances to determine whether the instance is in a healthy state or an unhealthy state.

- A health check is a ping, a connection attempt, or a page that is checked periodically.

- You can set the time interval between health checks and also the amount of time to wait to respond in case the health check page includes a computational aspect.

- Finally, you can set a threshold for the number of consecutive health check failures before an instance is marked as unhealthy.

AWS Elastic Beanstalk

- AWS Elastic Beanstalk is the fastest and simplest way to get an application up and running on AWS.

- Developers can simply upload their application code, and the service automatically handles all the details, such as resource provisioning, load balancing, Auto scaling, and monitoring.

- With AWS Elastic Beanstalk, you can quickly deploy and manage applications on the AWS cloud without worrying about the infrastructure that runs those applications. * An AWS Elastic Beanstalk application is the logical collection of these AWS Elastic Beanstalk components, which includes environments, versions, and environment configurations.

- In AWS Elastic Beanstalk, an application is conceptually similar to a folder.

- Application Version

- An application version refers to a specific, labeled iteration of deployable code for a web application.

- An application version points to an S3 object that contains the deployable code.

- Applications can have many versions and each application version is unique.

- In a running environment, organizations can deploy any application version they already uploaded to the application, or they can upload and immediately deploy a new application version.

- Organizations might upload multiple application versions to test differences between one version of their web application and another.

- Environment

- An environment is an application version that is deployed onto AWS resources.

- Each environment runs only a single application version at a time; however, the same version or different versions can run in as many environments at the same time as needed.

- When an environment is created, AWS Elastic Beanstalk provisions the resources needed to run the application version that is specified.

- Environment Configuration

- An environment configuration identifies a collection of parameters and settings that define how an environment and its associated resources behave.

- When an environment’s configuration settings are updated, AWS Elastic Beanstalk automatically applies the changes to existing resources or deletes and deploys new resources depending on the type of change.

- When an AWS Elastic Beanstalk environment is launched, the environment tier, platform, and environment type are specified.

- Environment tier : that determines whether AWS Elastic Beanstalk provisions resources to support a web application that handles HTTP(S) requests (web server tier) or an application that handles background-processing tasks (worker tier).

- Elastic Beanstalk provides platform support for the programming languages Java, Node.js, PHP, Python, Ruby, and Go with support for the web containers Tomcat, Passenger, Puma, and Docker.

Use Cases

A company provides a website for prospective home buyers, sellers, and renters to browse home and apartment listings for more than 110 million homes. The website processes more than three million new images daily. It receives more than 17,000 image requests per second on its website during peak traffic from both desktop and mobile clients. The company was looking for ways to be more agile with deployments and empower its developers to focus more on writing code instead of spending time managing and configuring servers, databases, load balancers, firewalls, and networks. It began using AWS Elastic Beanstalk as the service for deploying and scaling the web applications and services. Developers were empowered to upload code to AWS Elastic Beanstalk, which then automatically handled the deployment, from capacity provisioning, load balancing, and Auto Scaling, to application health monitoring. Because the company ingests data in a haphazard way, running feeds that dump a ton of work into the image processing system all at once, it needs to scale up its image converter fleet to meet peak demand. The company determined that an AWS Elastic Beanstalk worker fleet to run a Python Imaging Library with custom code was the simplest way to meet the requirement. This eliminated the need to have a number of static instances or, worse, trying to write their own Auto Scaling configuration. By making the move to AWS Elastic Beanstalk, the company was able to reduce operating costs while increasing agility and scalability for its image processing and delivery system.

Key Features

- Additionally, developers retain full control over the AWS resources powering their application and can perform a variety of functions by simply adjusting the configuration settings such as:

- Selecting the EC2 instance type

- Choosing the database and storage options

- Enabling login access to EC2 instances

- Enhancing application security by enabling HTTPS protocol on the load balancer

- Adjusting application server settings (for example, JVM settings) and passing environment variables

- Adjust Auto Scaling settings to control the metrics and thresholds used to determine when to add or remove instances from an environment

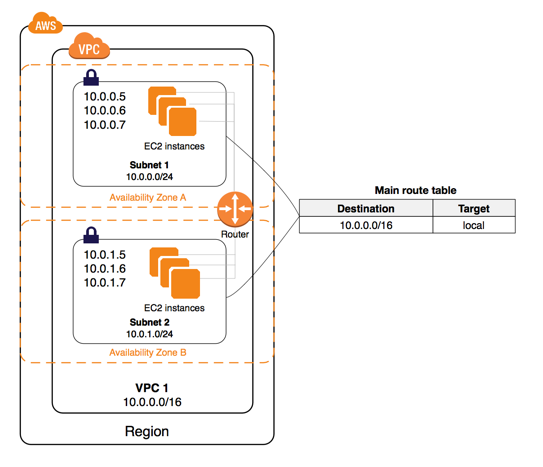

AWS Virtual Private Cloud (VPC)

- VPC is a custom-defined virtual network within AWS cloud

- VPC is the networking layer for EC2

- 2 networking platforms in AWS

- EC2-Classic (old) - single, flat n/w shared with other customers

- EC2-VPC

- VPC consists of

- Subnets

- Route tables

- DHCP option sets

- Security groups

- Network ACLs

- Internet Gateways (optional)

- EIP addresses (optional)

- Elastic Network Interfaces ENIs (optional)

- Endpoints (optional)

- Peering (optional)

- NAT instances and NAT gateways (optional)

- Virtual Private Gateways (VPGs), Customer Gateways (CGWs), VPNs

- (optional)

- VPC allows to

- select your own IP address range

- create your own subnets

- configure your own route tables, n/w gateways and security settings

- Within a region, you can create multiple VPCs. Each VPC is logically isolated even if it shared its IP address space

- VPC can span across Availability Zones

- To create a VPC, choose CIDR block

- which cannot be changed after creation

- As large as /16 (65,636 addresses) or as small as /28 (16 addresses).

- Should not overlap

- Every account has a default VPC created in each region with a default subnet created in each Availability Zone.

- The assigned CIDR block of the VPC will be 172.31.0.0/16

- Default VPCs contain one public subnet in every Availability Zone with the region, with a netmask of /20

- Orgs can extend their corporate data center n/w to AWS by using h/w or s/w VPN connections or dedicated circuits by using AWS Direct Connect.

Subnets

- A subnet is a segment of VPC’s IP address range where you can launch EC2 instances, RDS databases, etc.

- CIDR blocks defines subnets

- Smallest subnet you can create is a /28 (16 addresses).

- AWS reserves the first 4 addresses and the last IP address of every subnet for internal networking purposes. So,

16-4-1 = 11IP addresses is the smallest subnet.

- AWS reserves the first 4 addresses and the last IP address of every subnet for internal networking purposes. So,

- Subnets reside within one Availability Zone. CANNOT span across zones or regions.

- One Availability Zone can have multiple subnets

- Internal IP address range of the subnet is always private

- Subnets can be classified as one of the below

- Public : route table directs the subnet’s traffic to VPC’s IGW

- Private: route table DOES NOT direct the traffic to VPC’s IGW

- VPN-only: route table DOES NOT direct the traffic to VPC’s IGW, but to the VPC’s VPG

Route Tables

- contains a set of rules called rules that are applied to subnets to determine where the network traffic is directed

- allows EC2 instances in different subnets within a VPC to communicate with each other

- Route tables can be used to specify

- which subnets are public (by directing Internet traffic to IGW)

- which subnets are private (by not having a route that directs to IGW)

- Each route table

- has a default route called the local route, which enables communication within the VPC. This route cannot be modified or removed.

- custom routes can be added to exit the VPC via IGW, VPG or the NAT instance

- Each VPC comes with a main router table that can be modified.

- Custom route tables can be added, and subsequent new subnets created will be automatically associated with this custom route table

- Each subnet must be associated with a route table

- Each route in a table specifies a destination CIDR.

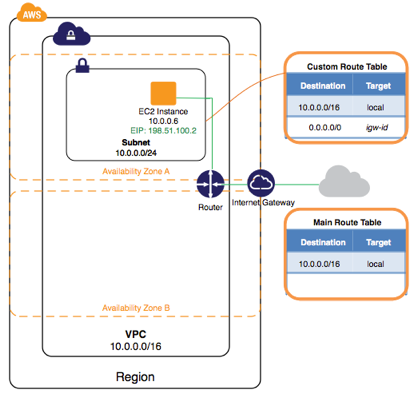

IGW (Internet Gateways)

- IGW allows communication between instances in VPC and the Internet.

- IGW is horizontally scaled, redundant, and highly available VPC component

- To route traffic subnets to Internet, route tables must have a route targeting the IGW

- To enable an EC2 instance to send/receive traffic from the Internet, assign a public IP address or EIP address

- IGW maintains the 1-to-1 map of the EC2 instance’s private IP and public IP.

- EC2 instances within a VPC are only aware of their private IPs. When traffic is sent from the instance to the Internet, the IGW translates the reply address to the public IP address of the instance.

- To create a public subnet with Internet access

- Attach an IGW to your VPC

- Create a subnet route table

- rule to route all non-local traffic (0.0.0.0/0) to the IGW

- (optional) rule to route all destinations not explicitly defined in the route table to IGW

- Configure your n/w ACLs and security group rules to allow relevant traffic flow to and from your instance

DHCP

- DHCP - a standard for passing configuration information to hosts on a TCP/IP n/w

- Following options can be configured within a DHCP message:

- DNS servers - IP addresses of up to 4 DNS servers.

- Domain name - e.g., mycompany.com

- ntp-servers - IP addresses of up to 4 NTP (Network Time Protocol) servers

- netbios-name-servers - IP addresses of up to 4 NetBIOS name servers

- netbios-node-type - set this to 2

- Upon a VPC creation, AWS automatically associates a DHCP option set to it, and sets 2 options

- DNS servers (defaulted to AmazonProvidedDNS. This option enables DNS for instances that need to communicate over the VPC’s IGW)

- Domain name (defaulted to domain name of your region)

- To assign your own domain name to your instances, create a custom DHCP option set and assign it to your VPC

- Each VPC must have only one DHCP option set assigned to it.

EIPs (Elastic IP Addresses)

- An Elastic IP Addresses (EIP) is a static, public IP address in the pool for the region that you can allocate to your account (pull from the pool) and release (return to the pool).

- EIPs allow you to maintain a set of IP addresses that remain fixed while the underlying infrastructure may change over time.

- You must first allocate an EIP for use within a VPC and then assign it to an instance.

- EIPs are specific to a region (that is, an EIP in one region cannot be assigned to an instance within an VPC in a different region).

- There is a one-to-one relationship between network interfaces and EIPs.

- You can move EIPs from one instance to another, either in the same VPC or a different VPC within the same region.

- EIPs remain associated with your AWS account until you explicitly release them.

- There are charges for EIPs allocated to your account, even when they are not associated with a resource.

ENIs (Elastic Network Interfaces)

- An ENI is a virtual network interface that you can attach to an instance in an VPC.

- ENIs are only available within an VPC, and they are associated with a subnet upon creation.

- They can have 1 public IP address, 1 primary private IP and multiple non-primary private IPs.

- Assigning a second network interface to an instance via an ENI allows it to be dual-homed (have network presence in different subnets).

- An ENI created independently of a particular instance persists regardless of the lifetime of any instance to which it is attached; if an underlying instance fails, the IP address may be preserved by attaching the ENI to a replacement instance.

Endpoints

- An VPC endpoint enables you to create a private connection between your VPC and another AWS service without requiring access over the Internet or through a NAT instance, VPN connection, or AWS Direct Connect.

- Multiple endpoints can be created for a single service, and use different route tables to enforce different access policies from different subnets to the same service.

- To create an VPC endpoint:

- Specify the VPC.

- Specify the service. A service is identified by a prefix list of the form

com.amazonaws.<region>.<service>. - Specify the policy. You can allow full access or create a custom policy. This policy can be changed at any time.